The world of open source is remarkable, and there is so much I want to learn. [Source below]

Top Python Libraries for Data Science, Data Visualization & Machine Learning - KDnuggets

This article compiles the 38 top Python libraries for data science, data visualization & machine learning, as best determined by KDnuggets staff.

www.kdnuggets.com

Data

1. Apache Spark

Stars: 27600, Commits: 28197, Contributors: 1638

Apache Spark - A unified analytics engine for large-scale data processing

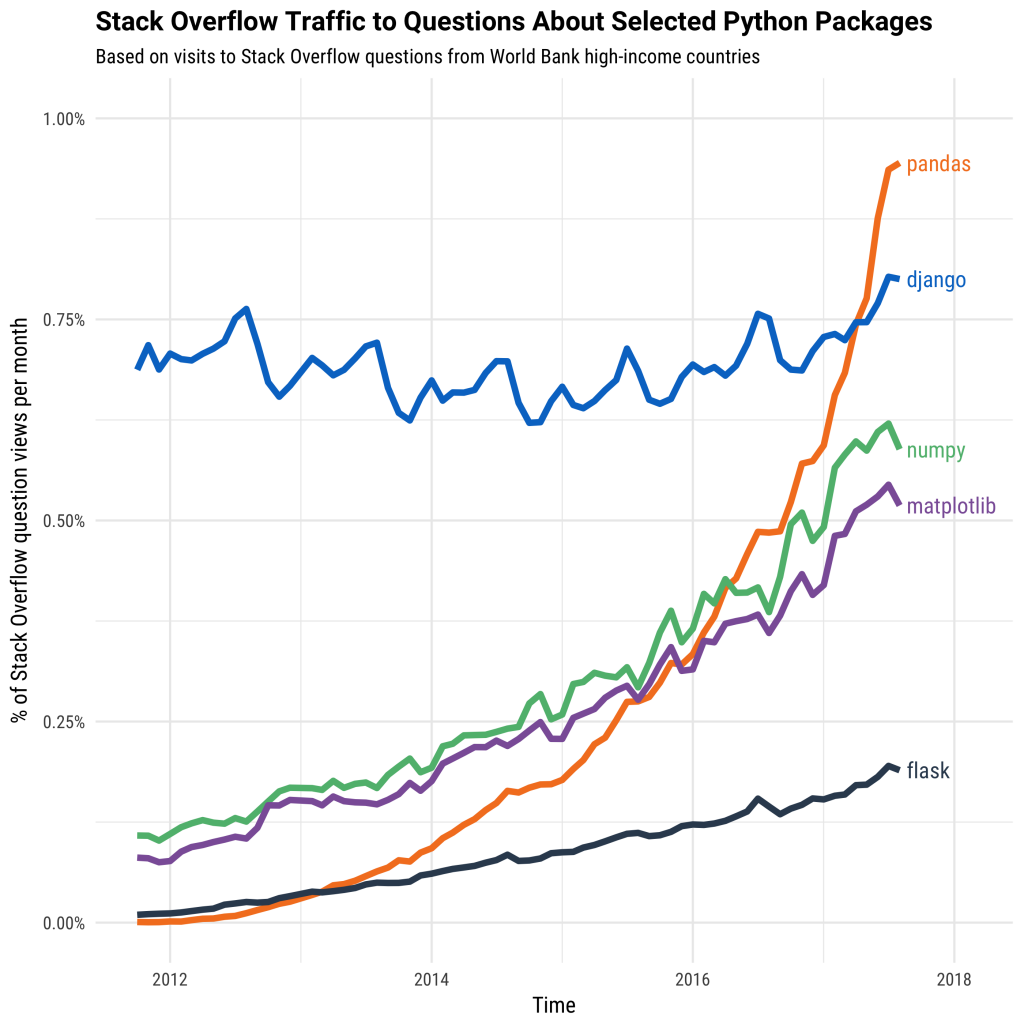

2. Pandas

Stars: 26800, Commits: 24300, Contributors: 2126

Pandas is a Python package that provides fast, flexible, and expressive data structures designed to make working with "relational" or "labeled" data both easy and intuitive. It aims to be the fundamental high-level building block for doing practical, real world data analysis in Python.
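To make the "relational" or "labeled" idea a little more concrete, here is a minimal sketch. The table contents and column names (`region`, `units`, `price`) are made up for illustration and do not come from the article.

```python
import pandas as pd

# Hypothetical sales records; the column names are illustrative only.
sales = pd.DataFrame(
    {
        "region": ["east", "west", "east", "west"],
        "units": [10, 4, 6, 8],
        "price": [2.5, 3.0, 2.5, 3.0],
    }
)

# Column arithmetic is vectorized and aligned on the row labels.
sales["revenue"] = sales["units"] * sales["price"]

# "Relational" style: group rows by a key and aggregate, much like SQL GROUP BY.
revenue_by_region = sales.groupby("region")["revenue"].sum()

print(revenue_by_region)
```

The result is a labeled Series indexed by region, so individual totals can be looked up by name, for example `revenue_by_region["east"]`.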

3. Dask

Stars: 7300, Commits: 6149, Contributors: 393

Parallel computing with task scheduling

Math

4. Scipy

Stars: 7500, Commits: 24247, Contributors: 914

SciPy (pronounced "Sigh Pie") is open-source software for mathematics, science, and engineering. It includes modules for statistics, optimization, integration, linear algebra, Fourier transforms, signal and image processing, ODE solvers, and more.
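As a rough illustration of two of the modules mentioned (integration and optimization), the sketch below integrates a known function and minimizes a simple quadratic. The functions were chosen only because their answers are easy to check by hand.

```python
import math

from scipy import integrate, optimize

# Integration: the area under sin(x) on [0, pi] is exactly 2.
area, abs_error = integrate.quad(math.sin, 0.0, math.pi)

# Optimization: minimize a simple quadratic whose minimum sits at x = 3.
result = optimize.minimize_scalar(lambda x: (x - 3.0) ** 2 + 1.0)

print(area, result.x, result.fun)
```

`quad` returns both the estimate and an error bound, and `minimize_scalar` returns a result object whose `x` and `fun` attributes hold the minimizer and the minimum value.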

5. Numpy

Stars: 1500, Commits: 24266, Contributors: 1010

The fundamental package for scientific computing with Python.

Machine Learning

6. Scikit-Learn

Stars: 42500, Commits: 26162, Contributors: 1881

Scikit-learn is a Python module for machine learning built on top of SciPy and is distributed under the 3-Clause BSD license.

7. XGBoost

Stars: 19900, Commits: 5015, Contributors: 461

Scalable, Portable and Distributed Gradient Boosting (GBDT, GBRT or GBM) Library, for Python, R, Java, Scala, C++ and more. Runs on single machine, Hadoop, Spark, Flink and DataFlow

8. LightGBM

Stars: 11600, Commits: 2066, Contributors: 172

A fast, distributed, high performance gradient boosting (GBT, GBDT, GBRT, GBM or MART) framework based on decision tree algorithms, used for ranking, classification and many other machine learning tasks.

9. Catboost

Stars: 5400, Commits: 12936, Contributors: 188

A fast, scalable, high performance Gradient Boosting on Decision Trees library, used for ranking, classification, regression and other machine learning tasks for Python, R, Java, C++. Supports computation on CPU and GPU.

10. Dlib

Stars: 9500, Commits: 7868, Contributors: 146

Dlib is a modern C++ toolkit containing machine learning algorithms and tools for creating complex software in C++ to solve real world problems. It can be used from Python via the dlib API.

11. Annoy

Stars: 7700, Commits: 778, Contributors: 53

Approximate Nearest Neighbors in C++/Python optimized for memory usage and loading/saving to disk

12. H2O.ai

Stars: 500, Commits: 27894, Contributors: 137

Open Source Fast Scalable Machine Learning Platform For Smarter Applications: Deep Learning, Gradient Boosting & XGBoost, Random Forest, Generalized Linear Modeling (Logistic Regression, Elastic Net), K-Means, PCA, Stacked Ensembles, Automatic Machine Learning (AutoML), etc.

13. StatsModels

Stars: 5600, Commits: 13446, Contributors: 247

Statsmodels: statistical modeling and econometrics in Python

14. mlpack

Stars: 3400, Commits: 24575, Contributors: 190

mlpack is an intuitive, fast, and flexible C++ machine learning library with bindings to other languages

15. Pattern

Stars: 7600, Commits: 1434, Contributors: 20

Web mining module for Python, with tools for scraping, natural language processing, machine learning, network analysis and visualization.

16. Prophet

Stars: 11500, Commits: 595, Contributors: 106

Tool for producing high quality forecasts for time series data that has multiple seasonality with linear or non-linear growth.

Automated Machine Learning

17. TPOT

Stars: 7500, Commits: 2282, Contributors: 66

A Python Automated Machine Learning tool that optimizes machine learning pipelines using genetic programming.

18. auto-sklearn

Stars: 4100, Commits: 2343, Contributors: 52

auto-sklearn is an automated machine learning toolkit and a drop-in replacement for a scikit-learn estimator.

19. Hyperopt-sklearn

Stars: 1100, Commits: 188, Contributors: 18

Hyperopt-sklearn is Hyperopt-based model selection among machine learning algorithms in scikit-learn.

20. SMAC-3

Stars: 529, Commits: 1882, Contributors: 29

Sequential Model-based Algorithm Configuration

21. scikit-optimize

Stars: 1900, Commits: 1540, Contributors: 59

Scikit-Optimize, or skopt, is a simple and efficient library to minimize (very) expensive and noisy black-box functions. It implements several methods for sequential model-based optimization.

22. Nevergrad

Stars: 2700, Commits: 663, Contributors: 38

A Python toolbox for performing gradient-free optimization

23. Optuna

Stars: 3500, Commits: 7749, Contributors: 97

Optuna is an automatic hyperparameter optimization software framework, particularly designed for machine learning.

Data Visualization

24. Apache Superset

Stars: 30300, Commits: 5833, Contributors: 492

Apache Superset is a Data Visualization and Data Exploration Platform

25. Matplotlib

Stars: 12300, Commits: 36716, Contributors: 1002

Matplotlib is a comprehensive library for creating static, animated, and interactive visualizations in Python.

26. Plotly

Stars: 7900, Commits: 4604, Contributors: 137

Plotly.py is an interactive, open-source, and browser-based graphing library for Python

27. Seaborn

Stars: 7700, Commits: 2702, Contributors: 126

Seaborn is a Python visualization library based on matplotlib. It provides a high-level interface for drawing attractive statistical graphics.

28. folium

Stars: 4900, Commits: 1443, Contributors: 109

Folium builds on the data wrangling strengths of the Python ecosystem and the mapping strengths of the Leaflet.js library. Manipulate your data in Python, then visualize it in a Leaflet map via folium.

29. Bqplot

Stars: 2900, Commits: 3178, Contributors: 45

Bqplot is a 2-D visualization system for Jupyter, based on the constructs of the Grammar of Graphics.

30. VisPy

Stars: 2500, Commits: 6352, Contributors: 117

VisPy is a high-performance interactive 2D/3D data visualization library. VisPy leverages the computational power of modern Graphics Processing Units (GPUs) through the OpenGL library to display very large datasets.

31. PyQtgraph

Stars: 2200, Commits: 2200, Contributors: 142

Fast data visualization and GUI tools for scientific / engineering applications

32. Bokeh

Stars: 1400, Commits: 18726, Contributors: 467

Bokeh is an interactive visualization library for modern web browsers. It provides elegant, concise construction of versatile graphics, and affords high-performance interactivity over large or streaming datasets.

33. Altair

Stars: 600, Commits: 3031, Contributors: 106

Altair is a declarative statistical visualization library for Python. With Altair, you can spend more time understanding your data and its meaning.

Explanation & Exploration

34. eli5

Stars: 2200, Commits: 1198, Contributors: 15

A library for debugging/inspecting machine learning classifiers and explaining their predictions

35. LIME

Stars: 800, Commits: 501, Contributors: 41

Lime: Explaining the predictions of any machine learning classifier

36. SHAP

Stars: 10400, Commits: 1376, Contributors: 96

A game theoretic approach to explain the output of any machine learning model.

37. YellowBrick

Stars: 300, Commits: 825, Contributors: 92

Visual analysis and diagnostic tools to facilitate machine learning model selection.

38. pandas-profiling

Stars: 6200, Commits: 704, Contributors: 47

Create HTML profiling reports from pandas DataFrame objects

Top 10 Python Data Science Libraries by GitHub Contributors, Commits and Size (size of the circle)

Now, let’s get onto the list (GitHub figures correct as of November 16th, 2018):

1. pandas (Contributors – 1328, Commits – 18162, Stars – 16890)

“pandas is a Python package providing fast, flexible, and expressive data structures designed to make working with "relational" or "labeled" data both easy and intuitive. It aims to be the fundamental high-level building block for doing practical, real world data analysis in Python.”

2. Matplotlib (Contributors – 771, Commits – 27937, Stars – 8224)

“Matplotlib is a Python 2D plotting library which produces publication-quality figures in a variety of hardcopy formats and interactive environments across platforms. Matplotlib can be used in Python scripts, the Python and IPython shell (à la MATLAB or Mathematica), web application servers, and various graphical user interface toolkits.”

3. NumPy (Contributors – 708, Commits – 19241, Stars – 8666)

“NumPy is the fundamental package needed for scientific computing with Python. It provides a powerful N-dimensional array object, sophisticated (broadcasting) functions, tools for integrating C/C++ and Fortran code and useful linear algebra, Fourier transform, and random number capabilities.”
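A short sketch of the capabilities named in that description (N-dimensional arrays, broadcasting, linear algebra, Fourier transforms, random numbers). All the values are illustrative.

```python
import numpy as np

# A 2-D array (3 rows, 2 columns) of illustrative values.
points = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])

# Broadcasting: the column means have shape (2,) and are stretched across all 3 rows.
centered = points - points.mean(axis=0)

# Linear algebra: solve A x = b without forming the inverse of A.
A = np.array([[2.0, 1.0], [1.0, 3.0]])
b = np.array([3.0, 5.0])
x = np.linalg.solve(A, b)

# Fourier transform of a constant signal: all energy lands in the zero-frequency bin.
spectrum = np.fft.fft(np.ones(4))

# Random numbers from a seeded generator, so repeated runs give the same draws.
rng = np.random.default_rng(seed=0)
samples = rng.normal(size=5)

print(centered, x, spectrum.real, samples.shape)
```

Solving with `np.linalg.solve` is generally preferred over computing an explicit inverse, since it is both faster and numerically more stable.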

4. SciPy (Contributors – 670, Commits – 20080, Stars – 5096)

“SciPy (pronounced "Sigh Pie") is open-source software for mathematics, science, and engineering. It includes modules for statistics, optimization, integration, linear algebra, Fourier transforms, signal and image processing, ODE solvers, and more.”

5. Bokeh (Contributors – 325, Commits – 17365, Stars – 8439)

“Bokeh is an interactive visualization library for Python that enables beautiful and meaningful visual presentation of data in modern web browsers. With Bokeh, you can quickly and easily create interactive plots, dashboards, and data applications.”

6. Gensim (Contributors – 299, Commits – 3676, Stars – 8107)

“Gensim is a Python library for topic modelling, document indexing and similarity retrieval with large corpora. Target audience is the natural language processing (NLP) and information retrieval (IR) community.”

7. Scrapy (Contributors – 295, Commits – 6802, Stars – 30014)

“Scrapy is a fast high-level web crawling and web scraping framework, used to crawl websites and extract structured data from their pages. It can be used for a wide range of purposes, from data mining to monitoring and automated testing.”

8. StatsModels (Contributors – 164, Commits – 10896, Stars – 3383)

“Statsmodels is a Python package that provides a complement to scipy for statistical computations including descriptive statistics and estimation and inference for statistical models.”

9. plotly.py (Contributors – 62, Commits – 3291, Stars – 4218)

“plotly.py is an interactive, open-source, and browser-based graphing library for Python. Built on top of plotly.js, plotly.py is a high-level, declarative charting library. plotly.js ships with over 30 chart types, including scientific charts, 3D graphs, statistical charts, SVG maps, financial charts, and more.”

10. pydot (Contributors – 12, Commits – 169, Stars – 267)

“pydot is an interface to Graphviz, can parse and dump into the DOT language used by Graphviz and is written in pure Python.”

Top 13 Python Deep Learning Libraries, by Commits and Contributors. Circle size is proportional to number of stars.

Now, let’s get onto the list (GitHub figures correct as of October 23rd, 2018):

1. TensorFlow (Contributors – 1700, Commits – 42256, Stars – 112591)

“TensorFlow is an open source software library for numerical computation using data flow graphs. The graph nodes represent mathematical operations, while the graph edges represent the multidimensional data arrays (tensors) that flow between them. This flexible architecture enables you to deploy computation to one or more CPUs or GPUs in a desktop, server, or mobile device without rewriting code.”
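The data flow graph idea can be sketched in plain Python. This is not TensorFlow code and does not use its API. `Node` and `evaluate` are hypothetical names invented for this illustration, and plain floats stand in for tensors to keep it short.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class Node:
    """One operation in the graph; `inputs` names the nodes whose outputs feed it."""

    op: Callable[..., float]
    inputs: Tuple[str, ...] = ()


def evaluate(graph: Dict[str, Node], target: str, feeds: Dict[str, float]) -> float:
    """Evaluate `target` by first evaluating every node it depends on."""
    cache: Dict[str, float] = dict(feeds)  # fed values act as the graph's inputs

    def visit(name: str) -> float:
        if name not in cache:
            node = graph[name]
            args = [visit(dep) for dep in node.inputs]  # values flowing along the edges
            cache[name] = node.op(*args)
        return cache[name]

    return visit(target)


# Graph for y = (a * b) + c
graph = {
    "product": Node(op=lambda a, b: a * b, inputs=("a", "b")),
    "y": Node(op=lambda p, c: p + c, inputs=("product", "c")),
}

y = evaluate(graph, "y", feeds={"a": 2.0, "b": 3.0, "c": 1.0})
print(y)  # 7.0
```

Because the computation is described as data before it runs, the same graph could in principle be handed to different executors, which is the property the quoted description attributes to TensorFlow's architecture.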

2. PyTorch (Contributors – 806, Commits – 14022, Stars – 20243)

“PyTorch is a Python package that provides two high-level features:

- Tensor computation (like NumPy) with strong GPU acceleration

- Deep neural networks built on a tape-based autograd system

You can reuse your favorite Python packages such as NumPy, SciPy and Cython to extend PyTorch when needed.”
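"Tape-based autograd" can be illustrated with a toy reverse-mode differentiator in plain Python. This is only a sketch of the general idea, not PyTorch's implementation. `Var` and `backward` are hypothetical names, and only scalar addition and multiplication are supported.

```python
class Var:
    """A scalar value that records the operations applied to it on a shared tape."""

    def __init__(self, value, tape):
        self.value = value
        self.grad = 0.0
        self.tape = tape

    def __add__(self, other):
        out = Var(self.value + other.value, self.tape)
        # d(out)/d(self) = 1 and d(out)/d(other) = 1
        self.tape.append((out, [(self, 1.0), (other, 1.0)]))
        return out

    def __mul__(self, other):
        out = Var(self.value * other.value, self.tape)
        # d(out)/d(self) = other.value and d(out)/d(other) = self.value
        self.tape.append((out, [(self, other.value), (other, self.value)]))
        return out


def backward(output):
    """Replay the tape in reverse, applying the chain rule at each recorded step."""
    output.grad = 1.0
    for out, parents in reversed(output.tape):
        for parent, local_grad in parents:
            parent.grad += local_grad * out.grad


tape = []
x = Var(3.0, tape)
y = Var(4.0, tape)
z = x * y + x  # the forward pass records two entries on the tape

backward(z)
print(x.grad, y.grad)  # 5.0 3.0
```

Here dz/dx = y + 1 = 5 and dz/dy = x = 3. The tape is built while ordinary Python code runs, which is why this style works naturally with loops and conditionals.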

3. Apache MXNet (Contributors – 628, Commits – 8723, Stars – 15447)

“Apache MXNet (incubating) is a deep learning framework designed for both efficiency and flexibility. It allows you to mix symbolic and imperative programming to maximize efficiency and productivity. At its core, MXNet contains a dynamic dependency scheduler that automatically parallelizes both symbolic and imperative operations on the fly.”

4. Theano (Contributors – 329, Commits – 28033, Stars – 8536)

“Theano is a Python library that allows you to define, optimize, and evaluate mathematical expressions involving multi-dimensional arrays efficiently. It can use GPUs and perform efficient symbolic differentiation.”

5. Caffe (Contributors – 270, Commits – 4152, Stars – 25927)

“Caffe is a deep learning framework made with expression, speed, and modularity in mind. It is developed by Berkeley AI Research (BAIR)/The Berkeley Vision and Learning Center (BVLC) and community contributors.”

6. fast.ai (Contributors – 226, Commits – 2237, Stars – 8872)

“The fastai library simplifies training fast and accurate neural nets using modern best practices. See the fastai website to get started. The library is based on research into deep learning best practices undertaken at fast.ai, and includes "out of the box" support for vision, text, tabular, and collab (collaborative filtering) models.”

7. CNTK (Contributors – 189, Commits – 15979, Stars – 15281)

“The Microsoft Cognitive Toolkit (https://cntk.ai) is a unified deep learning toolkit that describes neural networks as a series of computational steps via a directed graph. In this directed graph, leaf nodes represent input values or network parameters, while other nodes represent matrix operations upon their inputs. CNTK allows users to easily realize and combine popular model types such as feed-forward DNNs, convolutional nets (CNNs), and recurrent networks (RNNs/LSTMs).”

8. TFLearn (Contributors – 118, Commits – 599, Stars – 8632)

“TFLearn is a modular and transparent deep learning library built on top of TensorFlow. It was designed to provide a higher-level API to TensorFlow in order to facilitate and speed up experimentation, while remaining fully transparent and compatible with it.”

9. Lasagne (Contributors – 64, Commits – 1157, Stars – 3534)

“Lasagne is a lightweight library to build and train neural networks in Theano. It supports feed-forward networks such as Convolutional Neural Networks (CNNs), recurrent networks including Long Short-Term Memory (LSTM), and any combination thereof.”

10. nolearn (Contributors – 14, Commits – 389, Stars – 909)

“nolearn contains a number of wrappers and abstractions around existing neural network libraries, most notably Lasagne, along with a few machine learning utility modules. All code is written to be compatible with scikit-learn.”

11. Elephas (Contributors – 13, Commits – 249, Stars – 1046)

“Elephas is an extension of Keras, which allows you to run distributed deep learning models at scale with Spark. Elephas currently supports a number of applications, including:

- Data-parallel training of deep learning models

- Distributed hyper-parameter optimization

- Distributed training of ensemble models”

12. spark-deep-learning (Contributors – 12, Commits – 83, Stars – 1131)

“Deep Learning Pipelines provides high-level APIs for scalable deep learning in Python with Apache Spark. The library comes from Databricks and leverages Spark for its two strongest facets:

- In the spirit of Spark and Spark MLlib, it provides easy-to-use APIs that enable deep learning in very few lines of code.

- It uses Spark's powerful distributed engine to scale out deep learning on massive datasets.”

13. Distributed Keras (Contributors – 5, Commits – 1125, Stars – 523)

“Distributed Keras is a distributed deep learning framework built on top of Apache Spark and Keras, with a focus on "state-of-the-art" distributed optimization algorithms. We designed the framework in such a way that a new distributed optimizer could be implemented with ease, thus enabling a person to focus on research.”
